Best GPU Server for AI | How It Works and Use Cases 2026

Standard PCs and CPU-based workstations cannot efficiently handle workloads like machine learning, neural network training, deep learning, scientific simulations, and large-scale data analysis. These tasks require thousands of calculations to run in parallel, and GPUs are designed specifically for this kind of massively parallel work. This is why AI research, large language model (LLM) training, computer vision, and data-intensive workloads depend on GPU computing.

However, building new local hardware for every workload is not always practical. This is where GPU servers for AI become the best solution. GPU servers such as NVIDIA DGX systems, Supermicro GPU servers, Dell PowerEdge GPU servers, and AMD Instinct-based platforms provide multiple high-end GPUs with optimized power delivery, cooling, and memory access.

Top 10 Best GPU Servers for AI (2026)

All specifications listed below are sourced from the official websites of GPU server manufacturers. These GPU servers for AI were evaluated across different companies and workloads, and the remarks are based on the performance observed during that usage.

1. NVIDIA DGX H100

| Specification | Details |
|---|---|
| GPU Configuration | 8× NVIDIA H100 GPUs |
| Total GPU Memory | 640 GB (combined) |
| NVLink per GPU | 18× NVIDIA® NVLink® connections |
| GPU-to-GPU Bandwidth (NVLink) | 900 GB/s bidirectional per GPU |
| NVSwitch Fabric | 4× NVIDIA NVSwitch™ |
| Total GPU Interconnect Bandwidth | 7.2 TB/s bidirectional |
| Interconnect Improvement | 1.5× higher than the previous generation |
| Network Interfaces | 10× NVIDIA ConnectX®-7 |
| Network Speed per NIC | 400 Gbps |
| Total Network Bandwidth | 1 TB/s peak bidirectional |
| CPU Configuration | Dual Intel Xeon Platinum 8480C |
| Total CPU Cores | 112 cores |
| System Memory (RAM) | 2 TB |
| Storage Type | NVMe SSD |
| Total Storage Capacity | 30 TB |
| Primary Use Case | Large-scale AI training, especially in multi-node environments |

Remarks-

It stands out as one of the best GPU servers for AI because it performs reliably for large-scale AI training, especially in multi-node environments. GPU communication remains stable during long training runs, and performance stays consistent under sustained workloads.

2. NVIDIA DGX H200

| Specification | Details |
|---|---|
| GPU Configuration | 8× NVIDIA H200 Tensor Core GPUs |
| GPU Memory (Per GPU) | 141 GB HBM3e |
| Total GPU Memory | 1,128 GB |
| AI Performance | 32 PetaFLOPS (FP8) |
| GPU Interconnect | 4× NVIDIA NVSwitch™ |
| CPU | Dual Intel® Xeon® Platinum 8480C |
| CPU Cores | 112 cores total |
| CPU Frequency | 2.00 GHz (Base), up to 3.80 GHz (Max Boost) |
| System Memory (RAM) | 2 TB |
| Networking (High-Speed) | 4× OSFP ports serving 8× single-port NVIDIA ConnectX-7 VPI (up to 400 Gb/s InfiniBand/Ethernet); 2× dual-port QSFP112 NVIDIA ConnectX-7 VPI (up to 400 Gb/s InfiniBand/Ethernet) |
| Management Networking | 10 Gb/s onboard NIC (RJ45); 100 Gb/s Ethernet NIC; host baseboard management controller (BMC) with RJ45 |
| Operating System Storage | 2× 1.92 TB NVMe M.2 |
| Internal Storage | 8× 3.84 TB NVMe U.2 |
| Software Stack | NVIDIA AI Enterprise; NVIDIA Base Command (orchestration and scheduling) |
| Supported OS | DGX OS / Ubuntu / Red Hat Enterprise Linux / Rocky Linux |
| Power Consumption | Up to 10.2 kW (standard configuration) |
| Custom Thermal Support | Up to 14.3 kW (DGX H200 CTS) |
| System Weight | 287.6 lbs (130.45 kg) |
| Packaged Weight | 376 lbs (170.45 kg) |
| Dimensions (H×W×L) | 14.0 × 19.0 × 35.3 in (356 × 482.2 × 897.1 mm) |
| Operating Temperature | 5°C – 30°C (41°F – 86°F) |
| Support | 3-year business-standard hardware and software support |
| Positioning | The Gold Standard for AI Infrastructure |

Remarks-

DGX H200 stands out when working with memory-intensive AI models. The HBM3e memory helps avoid data bottlenecks, allowing workloads to run smoothly even during peak utilization.
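To make "memory-intensive" concrete, the sketch below estimates whether a model's weights fit on one DGX H200 GPU (141 GB) or across all eight (1,128 GB). It is a rough sketch under stated assumptions: it counts weights only, at a chosen precision, plus a hypothetical 20% headroom for activations, KV cache, and runtime overhead. Training needs considerably more memory (gradients and optimizer state), so treat the result as a lower bound.

```python
# Rough weight-memory estimate for a DGX H200-class system.
# Assumptions (not from any vendor tool): weights only, plus a fixed
# headroom fraction for activations / KV cache / runtime overhead.

BYTES_PER_PARAM = {"fp32": 4.0, "bf16": 2.0, "fp8": 1.0, "fp4": 0.5}

GPU_MEMORY_GB = 141  # DGX H200, per GPU (from the spec table)
NUM_GPUS = 8         # 8 GPUs -> 1,128 GB total


def weights_gb(num_params: float, dtype: str) -> float:
    """Memory needed just to hold the weights, in GB (1 GB = 1e9 bytes)."""
    return num_params * BYTES_PER_PARAM[dtype] / 1e9


def fits(num_params: float, dtype: str, gpus: int, headroom: float = 0.2) -> bool:
    """True if weights plus a hypothetical `headroom` fraction fit in `gpus` GPUs."""
    needed = weights_gb(num_params, dtype) * (1 + headroom)
    return needed <= GPU_MEMORY_GB * gpus


if __name__ == "__main__":
    for params in (8e9, 70e9, 405e9):
        print(f"{params / 1e9:.0f}B params @ bf16: "
              f"{weights_gb(params, 'bf16'):.0f} GB of weights | "
              f"1 GPU: {fits(params, 'bf16', 1)} | "
              f"{NUM_GPUS} GPUs: {fits(params, 'bf16', NUM_GPUS)}")
```

A 70B-parameter model in bf16 needs about 140 GB for its weights alone, so with any overhead it spills past a single 141 GB GPU but fits comfortably across the full system.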

3. NVIDIA DGX B200

| Specification | Details |
|---|---|
| GPU Setup | 8× NVIDIA Blackwell architecture GPUs |
| Total GPU Memory | 1.44 TB HBM3e |
| Memory Bandwidth | Up to 64 TB/s aggregate |
| AI Compute Performance | FP4 Tensor Core: up to 144 PFLOPS with sparsity (72 PFLOPS dense); FP8 Tensor Core: up to 72 PFLOPS |
| GPU Interconnect | 2× NVIDIA NVSwitch |
| NVLink Throughput | 14.4 TB/s total GPU-to-GPU bandwidth |
| CPU Configuration | Dual Intel® Xeon® Platinum 8570 processors |
| CPU Cores | 112 cores combined |
| CPU Clock Speed | 2.1 GHz base, up to 4.0 GHz boost |
| System Memory (RAM) | 2 TB (expandable to 4 TB) |
| High-Speed Networking | 4× OSFP ports serving 8× single-port NVIDIA ConnectX-7 VPI; up to 400 Gb/s InfiniBand or Ethernet |
| Data Processing Units (DPU) | 2× dual-port QSFP112 NVIDIA BlueField-3 |
| Management & Control Network | 10 Gb/s onboard RJ45 NIC; 100 Gb/s dual-port Ethernet NIC; dedicated BMC with RJ45 |
| Boot Storage | 2× 1.9 TB NVMe M.2 (OS drives) |
| Internal Storage | 8× 3.84 TB NVMe U.2 |
| Software Stack | NVIDIA AI Enterprise platform; NVIDIA Mission Control with NVIDIA Run:ai |
| Supported Operating Systems | NVIDIA DGX OS / Ubuntu |
| Rack Space Required | 10 rack units (10RU) |
| Physical Dimensions (H×W×D) | 17.5 × 19.0 × 35.3 in (444 × 482.2 × 897 mm) |
| Power Consumption | Approximately 14.3 kW (maximum load) |
| Operating Temperature Range | 10°C – 35°C (50°F – 95°F) |
| Enterprise Support | 3-year business-standard hardware and software coverage |

Remarks-

This system is well-suited for next-generation AI workloads. The Blackwell architecture improves efficiency when training large foundation models, particularly when using lower-precision formats like FP4 and FP8.
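A common back-of-the-envelope way to reason about training time on a system like this is the rule of thumb that training a dense transformer costs roughly 6 × parameters × training tokens floating-point operations. The sketch below converts that into wall-clock days from a peak-throughput figure such as the DGX B200's 72 PFLOPS FP8 rating. The 40% utilization value and the example model size are hypothetical assumptions, and spec-sheet peaks are maxima that real training rarely approaches, so treat the output as an order-of-magnitude estimate only.

```python
# Order-of-magnitude training-time estimate using the common
# "training FLOPs ~= 6 * N * D" rule of thumb for dense transformers.

SECONDS_PER_DAY = 86_400


def training_flops(num_params: float, num_tokens: float) -> float:
    """Approximate total training compute (forward + backward passes)."""
    return 6.0 * num_params * num_tokens


def training_days(num_params: float, num_tokens: float,
                  peak_flops: float, utilization: float) -> float:
    """Wall-clock days when running at `utilization` x peak throughput."""
    if not 0 < utilization <= 1:
        raise ValueError("utilization must be in (0, 1]")
    effective = peak_flops * utilization  # FLOP/s actually achieved
    return training_flops(num_params, num_tokens) / effective / SECONDS_PER_DAY


if __name__ == "__main__":
    PEAK_FP8 = 72e15  # DGX B200 FP8 Tensor Core figure from the spec table
    MFU = 0.40        # hypothetical model FLOPs utilization
    days = training_days(7e9, 1e12, PEAK_FP8, MFU)
    print(f"7B params, 1T tokens: ~{days:.1f} days on one system")
```

Because time scales inversely with effective throughput, any gain from a lower-precision format only helps to the extent that the workload can actually use it at high utilization.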

4. Supermicro GPU A+ Server (8× GPUs)

| Specification | Details |
|---|---|
| Form Factor | 4U rackmount server |
| CPU | Dual Intel® Xeon® 6960P processors |
| Total CPU Cores | 144 cores (72 per CPU) |
| Base Clock Speed | 2.70 GHz |
| CPU Cache | 432 MB L3 cache |
| Processor Power | Up to 500 W per CPU |
| System Memory | 1.5 TB DDR5 ECC |
| Memory Slots | 24× DDR5 DIMM slots |
| Memory Configuration | 24× 64 GB DDR5-6400 ECC RDIMM |
| GPU Capacity | Up to 8× double-width GPUs |
| Installed GPUs | 8× NVIDIA RTX PRO 6000 Blackwell Server Edition |
| GPU Memory (Per GPU) | 96 GB GDDR7 |
| Total GPU Memory | 768 GB |
| GPU Power Consumption | 600 W per GPU |
| NVMe Drive Bays | 8× E3.S NVMe hot-swap bays |
| Boot Storage | 2× 1.92 TB E3.S NVMe PCIe 5.0 SED |
| High-Capacity Storage | 4× 7.68 TB E3.S NVMe PCIe 5.0 SED |
| M.2 Slots | 2× NVMe M.2 |
| Onboard Networking | 2× 10 GbE RJ45 |
| AIOM Adapter | NVIDIA ConnectX-6 Lx 25 GbE (2× SFP28) |
| High-Speed NICs | 4× NVIDIA ConnectX-6 Dx 100 GbE (QSFP56) |
| Expansion Architecture | Optimized for multi-GPU AI clusters and high-throughput workloads |

Remarks-

The main advantage of this server is its flexibility. It allows easy customization of GPUs and networking, making it a practical choice for both research environments and enterprise AI deployments.

5. Dell PowerEdge XE9785

| Specification | Details |
|---|---|
| Processor (CPU) | Dual 5th Gen AMD EPYC™ 9005 Series |
| Max CPU Cores | Up to 384 cores total (192 cores per processor) |
| Operating Systems | Ubuntu Server LTS, Red Hat Enterprise Linux |
| Accelerator Options | 8× AMD Instinct™ MI355X (288 GB each, OAM, Infinity Fabric) or NVIDIA HGX B300 NVL8 (8 GPUs, 270 GB each, SXM6, NVLink) |
| GPU Interconnect | AMD Infinity Fabric (MI355X) / NVIDIA NVLink (B300) |
| GPU Power Rating | MI355X: 1400 W per GPU; B300: 1100 W per GPU |
| System Memory Type | DDR5 RDIMM |
| Memory Speed | Up to 6400 MT/s |
| Memory Slots | 24× DDR5 DIMM |
| Maximum System RAM | Up to 6 TB |
| Front NVMe Bays | Up to 16× E3.S NVMe (max 245.76 TB) |
| U.2 NVMe Support | Up to 10× Gen5 U.2 NVMe (max 153.6 TB) |
| Boot Storage | NVMe BOSS-N1 (2× M.2 SSDs, HW RAID 1) |
| Security Features | AMD SEV & SME, Secure Boot, TPM 2.0, Silicon Root of Trust, Firmware Signing, SED Encryption, Secure Erase, Chassis Intrusion Detection |
| System Management | iDRAC10, iDRAC Direct, Redfish API, iDRAC Service Module |
| OpenManage Software | OpenManage Enterprise, Power Manager, Update Manager, Service Plugin, CloudIQ |
| Automation & Tools | Dell System Update, IPMI, RACADM CLI, Red Hat Ansible, Terraform Providers |
| Network Interface Options | 1× OCP 3.0 (Gen5 x8 PCIe lanes) |
| Embedded OSFP Ports | MI355X: not applicable; B300: 8× CX8 OSFP (default) |
| Front I/O Ports | USB-C (iDRAC Direct), 2× RJ45 iDRAC, USB-A, Mini-DisplayPort |
| PCIe Expansion | MI355X: 12× Gen5 x16 (75 W FHHL); B300: 4× Gen5 x16 (150 W FHHL) |
| Power Supplies | 12× 3200 W Titanium, hot-swap, redundant (200–240 VAC) |
| Cooling System | 15 hot-swap GPU fans + 5 cold-swap CPU fans |
| Form Factor | 10U rackmount server |
| Optional Accessories | Front security bezel |
| Dimensions (H×W×D) | 17.30″ × 18.98″ × 41.12″ (with bezel) |
| System Weight | MI355X: 163.6 kg (360.7 lbs); B300: 156.0 kg (343.9 lbs) |

Remarks-

This server offers strong scalability and management capabilities. The option to choose between AMD and NVIDIA accelerators makes it adaptable to different workload requirements.

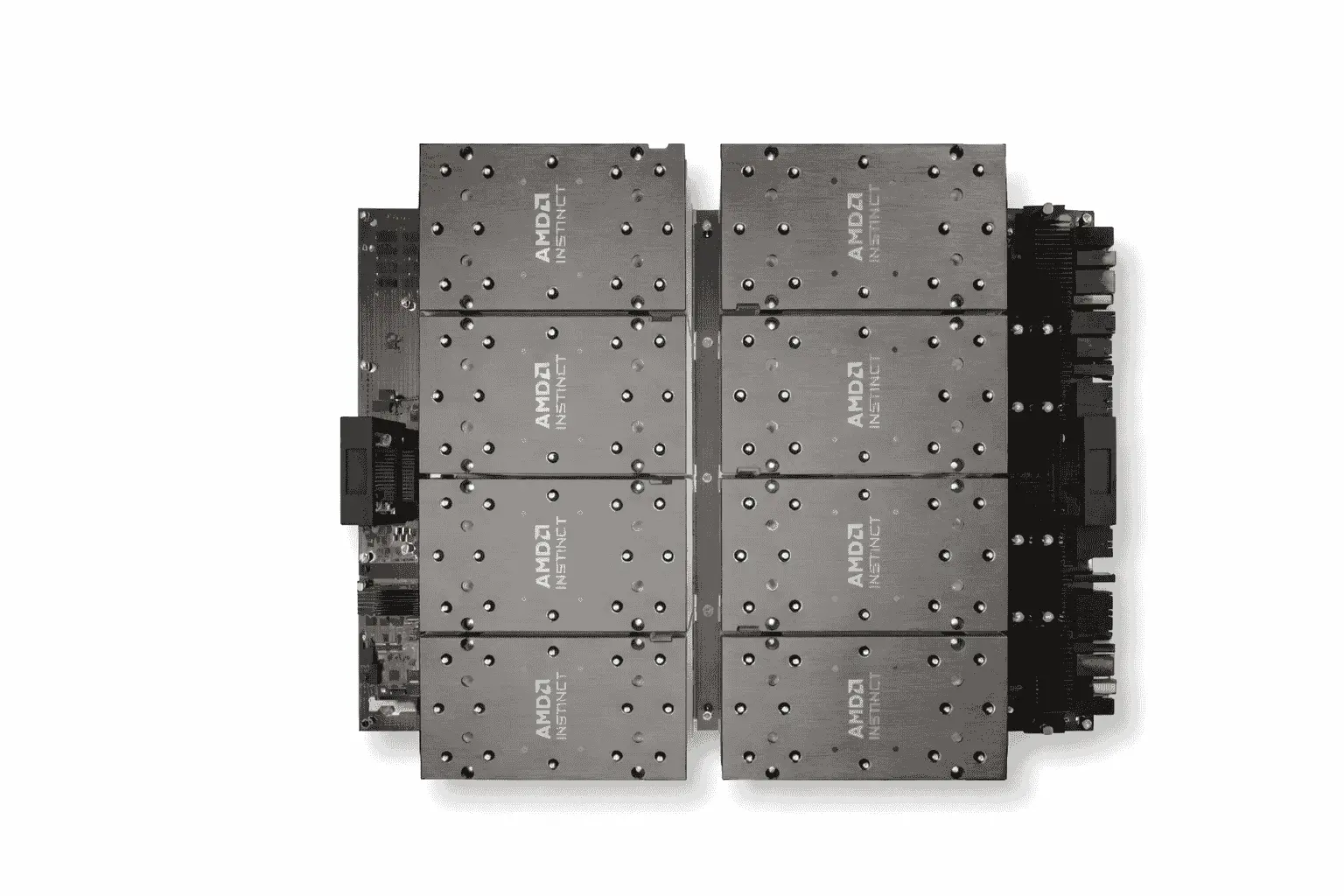

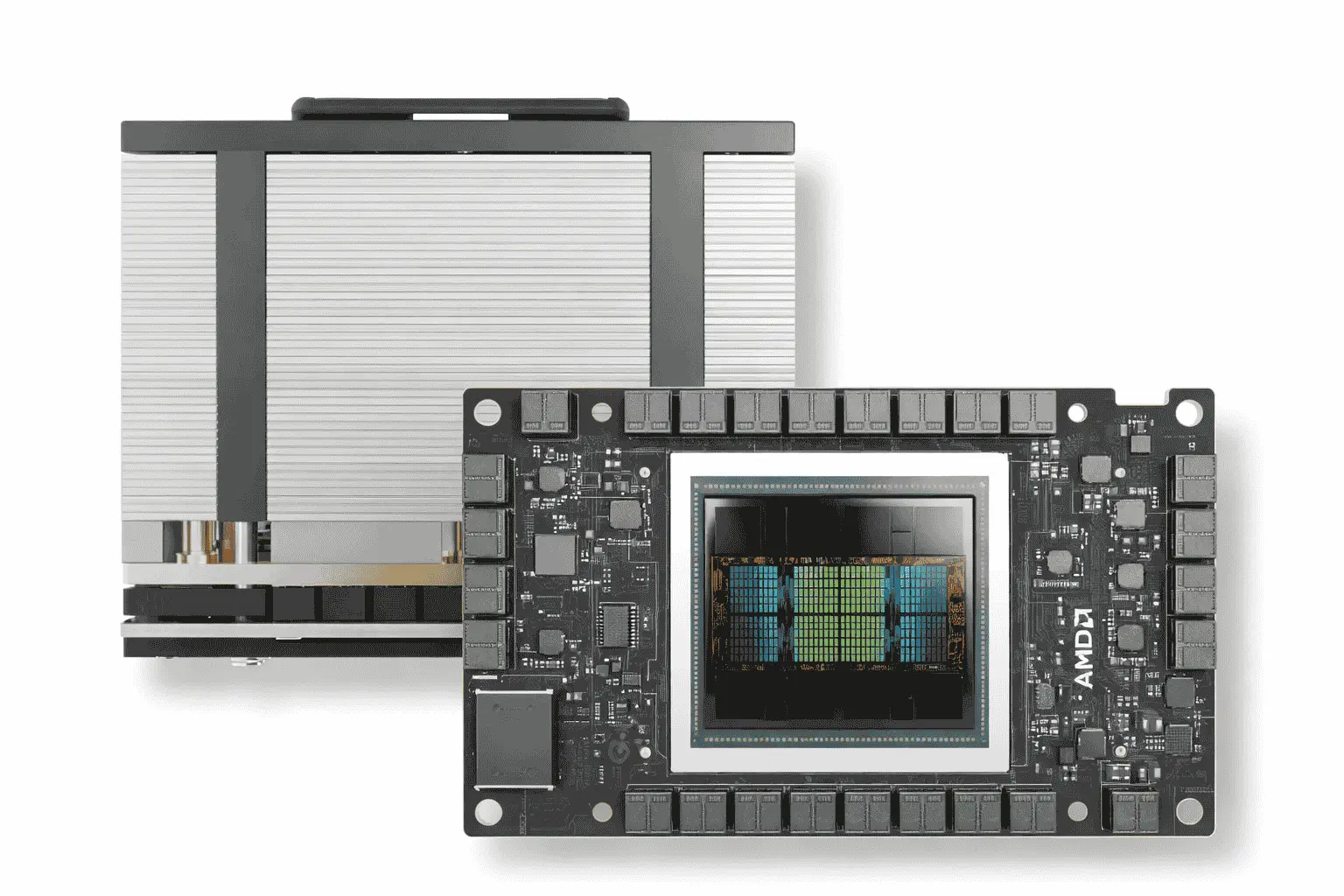

6. AMD Instinct MI300X Platform

| Specification | Details |
|---|---|
| Series | Instinct MI300 Series |
| Form Factor | Instinct Platform (UBB 2.0) |
| GPUs | 8× Instinct MI300X OAM |
| Dimensions | 417 mm × 553 mm |
| Launch Date | December 6, 2023 |
| Total Memory | 1.5 TB HBM3 |
| Memory Bandwidth | 5.3 TB/s per OAM |
| Infinity Architecture | 4th Generation |
| Bus Type | PCIe® Gen 5 (128 GB/s) |
| Aggregate Infinity Fabric Bandwidth | 896 GB/s |
| Warranty | 3-year limited |
| AI Performance (FP8) | 20.9 PFLOPS (41.8 PFLOPS with structured sparsity) |
| AI Performance (TF32) | 5.2 PFLOPS (10.5 PFLOPS with structured sparsity) |
| AI Performance (FP16) | 10.5 PFLOPS (20.9 PFLOPS with structured sparsity) |
| AI Performance (bfloat16) | 10.5 PFLOPS (20.9 PFLOPS with structured sparsity) |
| AI Performance (INT8) | 20.9 POPS (41.8 POPS with structured sparsity) |
| HPC Performance (FP64 Matrix) | 1.3 PFLOPS |
| HPC Performance (FP64) | 653.6 TFLOPS |
| HPC Performance (FP32 Matrix & FP32) | 1.3 PFLOPS |

Remarks-

Best GPU server for AI models where memory capacity is critical. The high-bandwidth HBM3 memory supports efficient model training and inference at scale.

7. NVIDIA Grace Hopper (GH200)

| Specification | Details |
|---|---|
| Architecture | Grace CPU + Hopper GPU |
| Memory Type | HBM3 / HBM3e GPU memory |
| CPU-GPU Interconnect Bandwidth | 900 GB/s NVLink-C2C (7× the bandwidth of PCIe Gen5) |
| CPU Cores | 72 Arm-based Grace CPU cores |
| GPU Performance | Up to 4 PFLOPS (Hopper GPU) |
| Superchip Coherent Memory | CPU and GPU share a per-process page table |
| NVLink | NVLink-C2C for CPU-GPU coherence |
| GH200 NVL2 Memory | 288 GB high-bandwidth memory, 1.2 TB fast memory |
| GH200 NVL2 Memory Bandwidth | 10 TB/s |
| Target Applications | AI, HPC, scientific compute, data processing, retrieval-augmented generation (RAG), graph neural networks (GNNs) |
| Performance Highlights | Scientific compute: 200 exaflops combined across supercomputers; data processing: up to 36× CPU speedup; RAG embedding: up to 30× speedup; GNN training: up to 8× faster than H100 PCIe |

Remarks-

The tight integration between the CPU and GPU delivers noticeable performance improvements for AI inference and data processing. It performs especially well for retrieval-augmented generation and graph-based workloads.

8. Lambda Hyperplane GPU Server

Lambda Hyperplane is a GPU server system built for heavy computing work. It combines multiple high-end GPUs (such as the NVIDIA H100) in a single machine, and these GPUs are connected with high-bandwidth links so that multi-GPU jobs are not held back by GPU-to-GPU communication.

This server has powerful CPUs and ample memory, making it suitable for demanding tasks such as large‑scale model training, simulation, or scientific computing, where multiple GPUs need to communicate quickly.

9. Hetzner Dedicated GPU Server

Hetzner offers dedicated physical servers that include a GPU with high video memory (VRAM). A dedicated server means you get the entire machine to yourself; you install and manage everything on it. These servers are good for:

10. RunPod Community Cloud

RunPod Community Cloud is an on-demand GPU rental service that allows users to access high-performance GPU nodes without owning physical hardware. Users can choose from various GPU types and launch instances whenever needed, paying only for the time the resources are in use. These servers are commonly recommended for workloads such as:


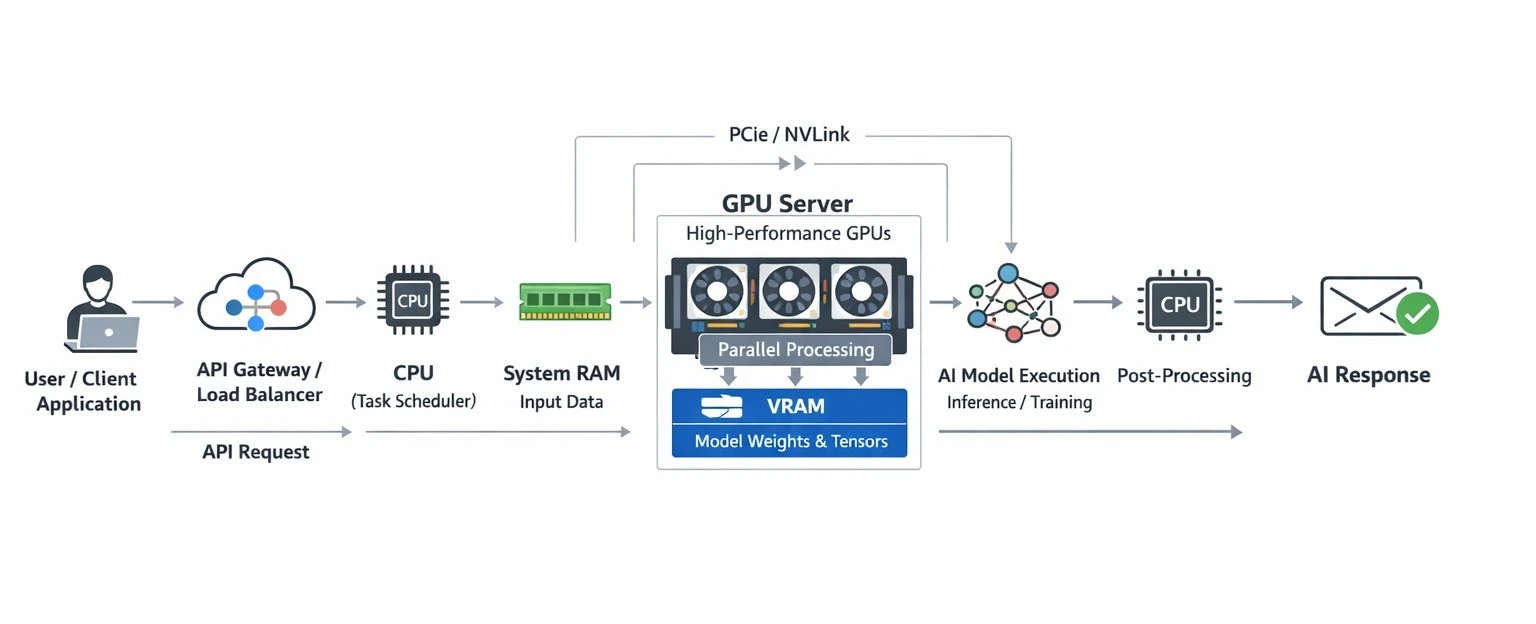

Working Mechanism of GPU Servers in AI

Core Architecture of a GPU Server

The core architecture of a GPU server for AI is designed to deliver high-performance computing by combining the power of CPUs, GPUs, memory, and high-speed interconnects into a single system. This design allows GPU servers to efficiently process complex workloads such as artificial intelligence, machine learning, deep learning, scientific simulations, and large-scale data analytics.

Memory and scalability are key pillars of GPU server architecture. GPUs use high-bandwidth VRAM to hold model weights, activations, and the current batch of data close to the processing cores, which significantly speeds up computation. Together with system RAM and NVMe-based storage, this layered memory hierarchy keeps data flowing smoothly through the server.

Many GPU servers support multiple GPUs in a single chassis, allowing workloads to be distributed for faster processing and better efficiency. Advanced cooling systems, power optimization, and software frameworks such as CUDA, GPU drivers, and virtualization technologies ensure stable performance, making GPU servers the backbone of modern cloud computing and AI-driven applications.

Parallel Processing Mechanism

Machine learning and neural network workloads consist mostly of matrix multiplications and vector operations. A CPU has relatively few cores, so it works through this kind of math largely sequentially, which becomes very slow on large datasets.

A GPU server solves this problem through parallel processing. A GPU contains thousands of small compute cores that can apply the same operation to different parts of the data simultaneously. The training data is divided into batches, and the computation within each batch (for example, the many independent multiply-add operations in a matrix multiplication) is spread across thousands of GPU cores at once. As a result, a GPU server can cut training time from days to hours, or from hours to minutes.
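Matrix multiplication maps so well onto thousands of GPU cores because every output element is an independent dot product. The pure-Python sketch below makes that independence explicit by submitting each output cell as a separate task to a thread pool. It only illustrates the decomposition: Python threads will not actually speed this up, and a real GPU kernel (for example, one written in CUDA) works on tiles of the output with thousands of hardware threads.

```python
from concurrent.futures import ThreadPoolExecutor
from itertools import product

Matrix = list[list[float]]


def matmul_sequential(a: Matrix, b: Matrix) -> Matrix:
    """Classic triple loop: one output element after another."""
    n, k, m = len(a), len(b), len(b[0])
    return [[sum(a[i][p] * b[p][j] for p in range(k)) for j in range(m)]
            for i in range(n)]


def matmul_parallel(a: Matrix, b: Matrix, workers: int = 8) -> Matrix:
    """Same result, but each output cell C[i][j] is an independent task,
    mirroring how a GPU assigns output cells (or tiles) to threads."""
    n, k, m = len(a), len(b), len(b[0])

    def cell(ij: tuple[int, int]) -> float:
        i, j = ij
        # No task reads another task's output, so any execution order
        # and any degree of parallelism produce the same answer.
        return sum(a[i][p] * b[p][j] for p in range(k))

    with ThreadPoolExecutor(max_workers=workers) as pool:
        flat = list(pool.map(cell, product(range(n), range(m))))
    # pool.map preserves input order, so a row-major reshape is safe.
    return [flat[i * m:(i + 1) * m] for i in range(n)]


if __name__ == "__main__":
    a = [[1.0, 2.0], [3.0, 4.0]]
    b = [[5.0, 6.0], [7.0, 8.0]]
    print(matmul_parallel(a, b))
```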

Task Distribution Between CPU and GPU

The CPU handles control-oriented work such as managing application flow, loading and preparing datasets, and controlling execution order. When a workload involves large-scale, repetitive mathematical operations, the application offloads it to the GPU through a GPU programming platform such as CUDA.

The GPU specializes in executing parallel numerical computations, including matrix multiplications, tensor transformations, and backpropagation calculations used in AI models.

This separation ensures that control-intensive processes remain with the CPU, while computation-heavy tasks are accelerated by the GPU, resulting in faster processing, reduced execution time, and optimized utilization of server resources.
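This division of labor is often implemented as a producer-consumer pipeline: CPU-side workers prepare the next batch while the accelerator computes on the current one. The stdlib-only sketch below models that pattern with a bounded queue. `prepare_batch` and `device_compute` are hypothetical stand-ins; in a real framework the first would be a data-loader worker and the second would launch GPU kernels.

```python
import queue
import threading

SENTINEL = None  # signals "no more batches"


def prepare_batch(raw: list[int]) -> list[float]:
    """CPU-side work (hypothetical): decode / normalize one batch."""
    return [x / 255.0 for x in raw]


def device_compute(batch: list[float]) -> float:
    """Stand-in for GPU work: a dense numeric reduction over the batch."""
    return sum(v * v for v in batch)


def run_pipeline(raw_batches: list[list[int]], prefetch: int = 2) -> list[float]:
    # Bounded queue: the CPU producer stays at most `prefetch` batches ahead.
    q: queue.Queue = queue.Queue(maxsize=prefetch)

    def producer() -> None:
        for raw in raw_batches:
            q.put(prepare_batch(raw))  # blocks if the consumer falls behind
        q.put(SENTINEL)

    threading.Thread(target=producer, daemon=True).start()

    results = []
    while (batch := q.get()) is not SENTINEL:
        results.append(device_compute(batch))  # the "GPU" loop
    return results


if __name__ == "__main__":
    print(run_pipeline([[0, 255], [255, 255], [51, 102]]))
```

The bounded queue is the key design choice: it lets data preparation overlap with computation without letting the CPU side race ahead and exhaust memory.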

Interconnects and Data Flow

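Interconnects such as PCIe and NVLink link the GPUs to the CPU and to each other. PCIe typically connects each GPU to the host CPU, while NVLink (combined with NVSwitch in systems such as DGX) provides much higher-bandwidth direct GPU-to-GPU links. This fast data sharing between GPUs is essential for multi-GPU training.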
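To see why link bandwidth matters, the sketch below estimates how long one gradient synchronization takes under the standard bandwidth-only cost model for ring all-reduce, in which each GPU sends about 2 × (N - 1)/N times the gradient size. The bandwidths are illustrative assumptions: 450 GB/s per direction corresponds to the 900 GB/s bidirectional NVLink figure in the DGX H100 table, and 64 GB/s per direction is the nominal rate of a PCIe Gen5 x16 link. Real throughput is lower and depends on topology and the communication library.

```python
def ring_allreduce_seconds(grad_bytes: float, num_gpus: int,
                           link_gb_per_s: float) -> float:
    """Bandwidth-only estimate of one ring all-reduce.

    Each GPU transmits 2 * (N - 1) / N * grad_bytes over its outgoing link
    (reduce-scatter phase + all-gather phase). Latency terms are ignored.
    """
    if num_gpus < 2:
        return 0.0
    bytes_sent = 2 * (num_gpus - 1) / num_gpus * grad_bytes
    return bytes_sent / (link_gb_per_s * 1e9)


if __name__ == "__main__":
    GRAD_BYTES = 7e9 * 2  # hypothetical 7B-parameter model, bf16 gradients
    for name, bw in (("NVLink (450 GB/s per direction)", 450.0),
                     ("PCIe Gen5 x16 (64 GB/s per direction)", 64.0)):
        t = ring_allreduce_seconds(GRAD_BYTES, 8, bw)
        print(f"{name}: ~{t * 1e3:.0f} ms per synchronization")
```

Because this cost is paid on every training step, a roughly 7× difference in link bandwidth translates directly into how much time GPUs spend waiting instead of computing.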

When an AI model is trained across multiple GPUs, each GPU processes its own portion of the data, and the results (typically the gradients) are then synchronized across all GPUs. High-bandwidth interconnects keep this synchronization fast, so communication does not become a bottleneck and training remains stable and consistent.
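The synchronization step is commonly implemented as an all-reduce, and ring all-reduce is one widely used algorithm for it (NCCL, for example, provides ring and tree variants). The pure-Python simulation below shows its two phases, reduce-scatter followed by all-gather, on lists standing in for per-GPU gradient vectors. It is a teaching sketch, not how a communication library is actually written, and it assumes the vector length is divisible by the number of GPUs.

```python
def ring_allreduce(grads: list[list[float]]) -> list[list[float]]:
    """Simulate a sum all-reduce over len(grads) 'GPUs' arranged in a ring.

    Each inner list is one GPU's gradient vector. Returns new buffers in
    which every GPU holds the element-wise sum of all input vectors.
    """
    n = len(grads)
    length = len(grads[0])
    if length % n != 0:
        raise ValueError("sketch assumes vector length divisible by GPU count")
    size = length // n
    bufs = [list(g) for g in grads]  # copy so the inputs are untouched

    def chunk(c: int) -> slice:
        return slice(c * size, (c + 1) * size)

    # Phase 1, reduce-scatter: in step s, GPU i sends chunk (i - s) % n to
    # its right-hand neighbour, which adds it into its own copy.
    for s in range(n - 1):
        # Snapshot all outgoing chunks first: sends in one step are simultaneous.
        sends = [(i, (i - s) % n, bufs[i][chunk((i - s) % n)]) for i in range(n)]
        for i, c, data in sends:
            dst = (i + 1) % n
            bufs[dst][chunk(c)] = [x + y for x, y in zip(bufs[dst][chunk(c)], data)]

    # GPU i now holds the fully reduced chunk (i + 1) % n.
    # Phase 2, all-gather: in step s, GPU i forwards chunk (i + 1 - s) % n,
    # and the neighbour overwrites its stale copy.
    for s in range(n - 1):
        sends = [(i, (i + 1 - s) % n, bufs[i][chunk((i + 1 - s) % n)]) for i in range(n)]
        for i, c, data in sends:
            bufs[(i + 1) % n][chunk(c)] = data
    return bufs


if __name__ == "__main__":
    per_gpu = [[1.0, 2.0, 3.0], [10.0, 20.0, 30.0], [100.0, 200.0, 300.0]]
    for rank, buf in enumerate(ring_allreduce(per_gpu)):
        print(rank, buf)
```

Dividing each summed buffer by the number of GPUs gives the averaged gradient used in data-parallel training. The ring layout is attractive because every GPU sends and receives the same amount of data, so no single link becomes a hotspot.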

GPU Server Use Cases In 2026

AI & Machine Learning Companies

GPU servers for AI are used for large-scale model training and production deployment. In these environments, systems such as NVIDIA DGX H100, DGX H200, and HGX B200–based servers are commonly deployed because they are optimized for multi-GPU parallel training.

These servers are used to train large language models (LLMs), vision transformers, recommendation systems, and multimodal models, where high VRAM capacity, NVLink interconnects, and fast tensor computation are required. GPU servers support distributed training, fine-tuning, and real-time inference pipelines in production environments.

Data Science & Data Analytics Firms

Data analytics firms use GPU servers to accelerate computation on massive datasets. In this domain, NVIDIA L40S servers, RTX 6000 Ada–based systems, and A10 Tensor Core GPU servers are widely used.

These GPU servers handle feature engineering, large-scale statistical modeling, graph analytics, and predictive simulations, where CPU-based clusters often become bottlenecks due to limited memory bandwidth and compute throughput. GPUs efficiently execute data-parallel operations in these workflows.

Healthcare & Medical Research Organizations

In healthcare and biomedical research, GPU servers play a role in medical imaging and scientific computation. Institutions commonly deploy NVIDIA DGX H100, AMD Instinct MI300X platforms, and Supermicro multi-GPU servers.

These servers are used for MRI and CT image segmentation, AI-assisted diagnostics, drug discovery simulations, and genome sequencing analysis. High-memory GPUs and strong FP16/FP32 compute performance are essential for these workloads.

Autonomous Vehicles & Robotics Companies

Autonomous systems rely on GPU servers for sensor data training and large-scale simulation environments. This sector commonly uses NVIDIA Grace Hopper (GH200) systems, HGX-based GPU servers, and RTX 6000 Ada clusters.

GPU servers are used to train computer vision models, sensor fusion networks, path planning algorithms, and reinforcement learning systems. These workloads also include large-scale simulations of real-world driving and robotics scenarios, which CPU-based systems cannot process efficiently.

Media, Entertainment & Animation Studios

Media and VFX studios deploy GPU servers as render farms and simulation pipelines. Typical setups include multi-GPU RTX 4090 servers, RTX 6000 Ada systems, and L40S inference servers.

These GPU servers handle ray tracing, physically based rendering (PBR), particle simulations, and high-resolution video encoding, where GPU parallelism can reduce render times from hours to minutes.

Scientific Research & High-Performance Computing (HPC)

Scientific research environments use GPU servers for numerical simulations and computational modeling. Common platforms include HPE Cray GPU clusters, Supermicro liquid-cooled rack-scale GPU systems, and AMD MI300X platforms.

These systems run climate modeling, molecular dynamics, astrophysics simulations, and physics-based solvers, executing thousands of calculations in parallel and significantly shortening research timelines.

Cloud Service Providers & AI Platforms

Cloud providers offer GPU servers as on-demand AI infrastructure. Typical offerings include DGX-based instances, H100 and L40S GPU nodes, and RTX 4090 cloud servers.

These platforms are used for scalable AI training environments, inference APIs, and temporary high-compute workloads, allowing companies to access enterprise-grade GPU performance without maintaining their own hardware.

Evaluate Before Buying a GPU Server for AI

Conclusion

GPU servers are the backbone of modern AI infrastructure because they combine parallel processing, high-bandwidth memory, and optimized data pipelines to solve problems that traditional CPU-based systems simply cannot handle at scale. From faster model training and inference to efficient handling of massive datasets, their architecture is purpose-built for performance, reliability, and scalability.

That is why we have recommended the best GPU servers for AI in this guide, so you can choose the right solution according to your workload and build an AI infrastructure with confidence.